In the previous post, we created the required Azure resources. In the last step of the previous post, we created Azure SSIS IR which is basically responsible for creating SSISDB in the Azure SQL Server where we’ll deploy the SSIS package.

In this demo, we are going to execute a SSIS package which will load the data from source table ([SalesLT].[Customer]) to the destination table([dbo].[Customer]). So, let’s create the Customer table in destination database with the same schema as that of Customer table in source database using the below script.

CREATE TABLE dbo.[Customer](

[CustomerID] [int] NULL,

[Title] [nvarchar](8) NULL,

[FirstName] nvarchar(100) null,

[MiddleName] nvarchar(100) NULL,

[LastName] nvarchar(100) null,

[Suffix] [nvarchar](10) NULL,

[CompanyName] [nvarchar](128) NULL,

[SalesPerson] [nvarchar](256) NULL,

[EmailAddress] [nvarchar](50) NULL,

[Phone] nvarchar(20) NULL,

[PasswordHash] [varchar](128) null,

[PasswordSalt] [varchar](10) null,

[rowguid] [uniqueidentifier] null,

[ModifiedDate] [datetime] null

)

Once done, let’s create one SSIS package using SSDT which will load the data from [sourcedev].[SalesLT].[Customer] to

[destinationdev].[dbo].[Customer].

After creating the package, let’s deploy the package from SSDT to the SSISDB database using Deployment Wizard. In Solution Explorer, Right Click on the Project and Click Deploy.

Fill the Azure SQL Server name, credential and click on Connect. Then Select the Path where we need to deploy the package. We have created a folder DEV where we are going to deploy the project.

Click on Deploy.

Once the deployment is successful, Click on Close.

After deployment, let’s verify the package has been deployed at the correct location using SQL Server Management Studio(SSMS).

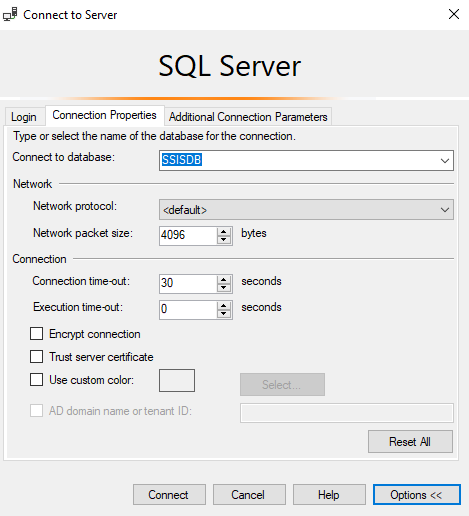

Fill Server name, credential and click on Options to go to Connection Properties Tab.

Type SSISDB(in uppercase as it’s case sensitive) in the Connect to database and click on Connect.

We should be able to see Integration Services Catalogs node –> SSISDB followed by the path <Folder Name>–> Projects –> <Project Name> –> Packages –> <Package Name> where we had deployed the package using Deployment Wizard.

NOTE: Integration Services Catalogs node will be visible if we type SSISDB in upper case. More details here.

After verifying that the SSIS package has been deployed successfully, let’s try to execute it using Azure Data Factory.

Browse through the ADF created in the previous post. Click on Author & Monitor.

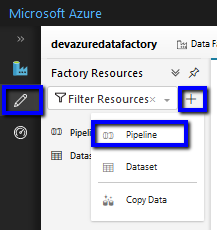

Click on Author icon on the left –>Click + –> Pipeline

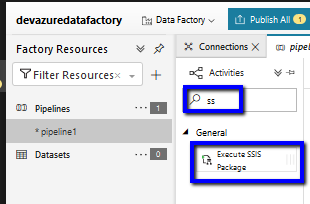

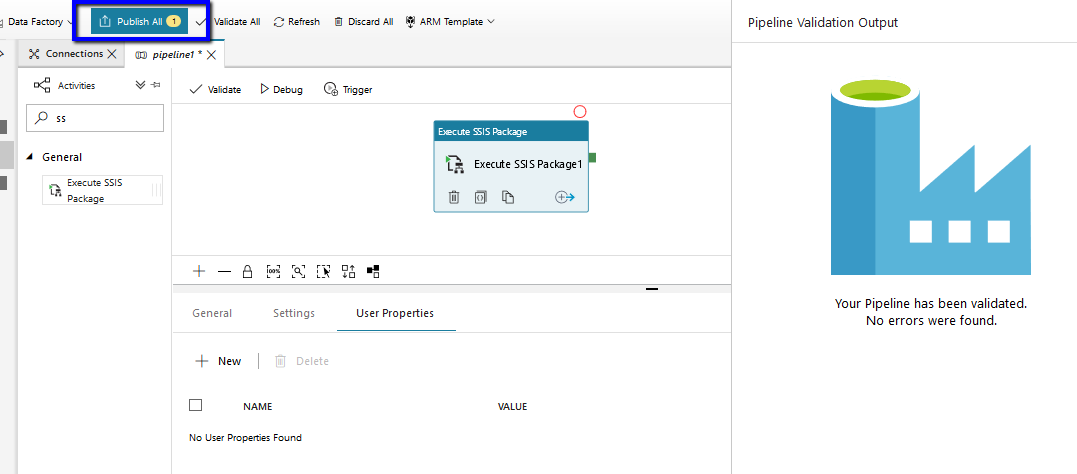

Select the pipeline –> Search for “SSIS” in the Activities textbox–> Drag Execute SSIS Package Activity to the canvas.

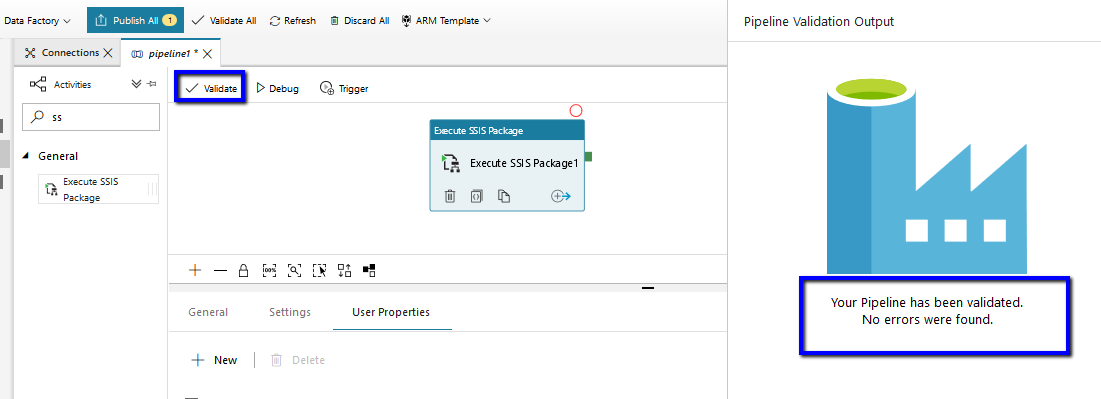

Select the Activity Execute SSIS Package on the canvas –> Go to Settings Tab –> Select the Azure SSIS IR we had created in the previous post –> Provide the Path where the SSIS package has been deployed on SSISDB.

Then Click Validate to ensure there are no errors. No errors were found message will appear on the right if there’s no error found during validation.

On successful validation, Click Publish All

Now that we have completed all the steps, let’s trigger the pipeline to see the result.

Click on Trigger Tab –> Trigger Now

Click Finish.

Click on Monitor icon on the left –> Go to Pipeline Runs Tab –> Refresh to see the result. We can see that it has succeeded.

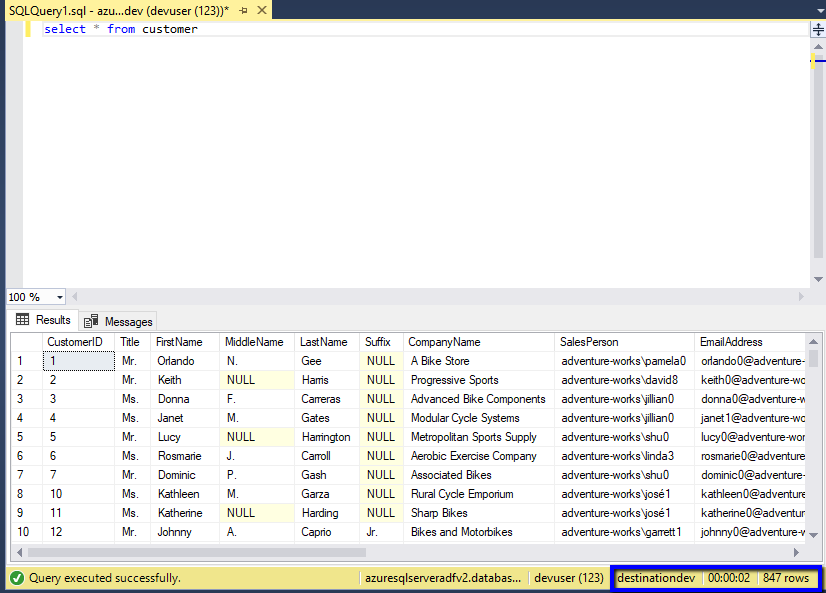

Let’s verify the result. In source database and destination database, in Customer table, we have same data with exact number of records.

So, in this part of the post we saw how to deploy the SSIS package to SSISDB and execute it using Azure Data Factory.

Hope it helps !!

Reblogged this on Nishant Rana's Weblog.

LikeLike